Expressive Biofeedback Chat

Overview

As an independent study advised by Professor Geoffrey Kaufmann and PhD candidate Fannie Liu, I designed and carried out a pilot study on sharing text animations driven by biofeedback from a wristband sensor.

Project Details

Tools

Sketch, HTML, CSS animations, Bootstrap, Node.js, Socket.io, Smoothie.js, Empatica Android SDK

Methods

Sketching, research planning, interview protocol design, interviewing, qualitative coding

Deliverables

I wrote up my process and findings in a final report for researchers at the eHeart Lab and Oh!Lab in Carnegie Mellon's Human-Computer Interaction Institute: Pilot Study: Sharing Electrodermal Biofeedback with Customizable Kinetic Type.

I also documented the chat code, which is available on GitHub with a README covering setup for two participants.

Background

Expressive biofeedback is the use of physiological data to influence social interactions. In this pilot study, I explored questions of trust, comfort, and expression with regard to sharing biofeedback data through messages with kinetic typography in a web chat. Six participants wore the Empatica E4 wristband sensor and monitored their baseline and current electrodermal activity in a chat interface while chatting with a confederate who asked emotionally charged questions of varying valence.

The pilot set out to explore four central questions:

- Are people comfortable sharing messages with biofeedback data?

- Do people want control over how their biofeedback data is presented to others?

- How do people understand the biofeedback data, as shown through data

- Do people trust that their biofeedback data is accurate?

How the chat works

This project built directly on Raina Langevin’s work for the project Understanding Perceptions of Expressive Biofeedback and Implementing it into a Kinetic Instant Messenger, which created several text animations corresponding to different kinds of biofeedback. In the chat implementation that I built for this pilot study, I finished implementing the two-way chat and collected the animations into an effect library.

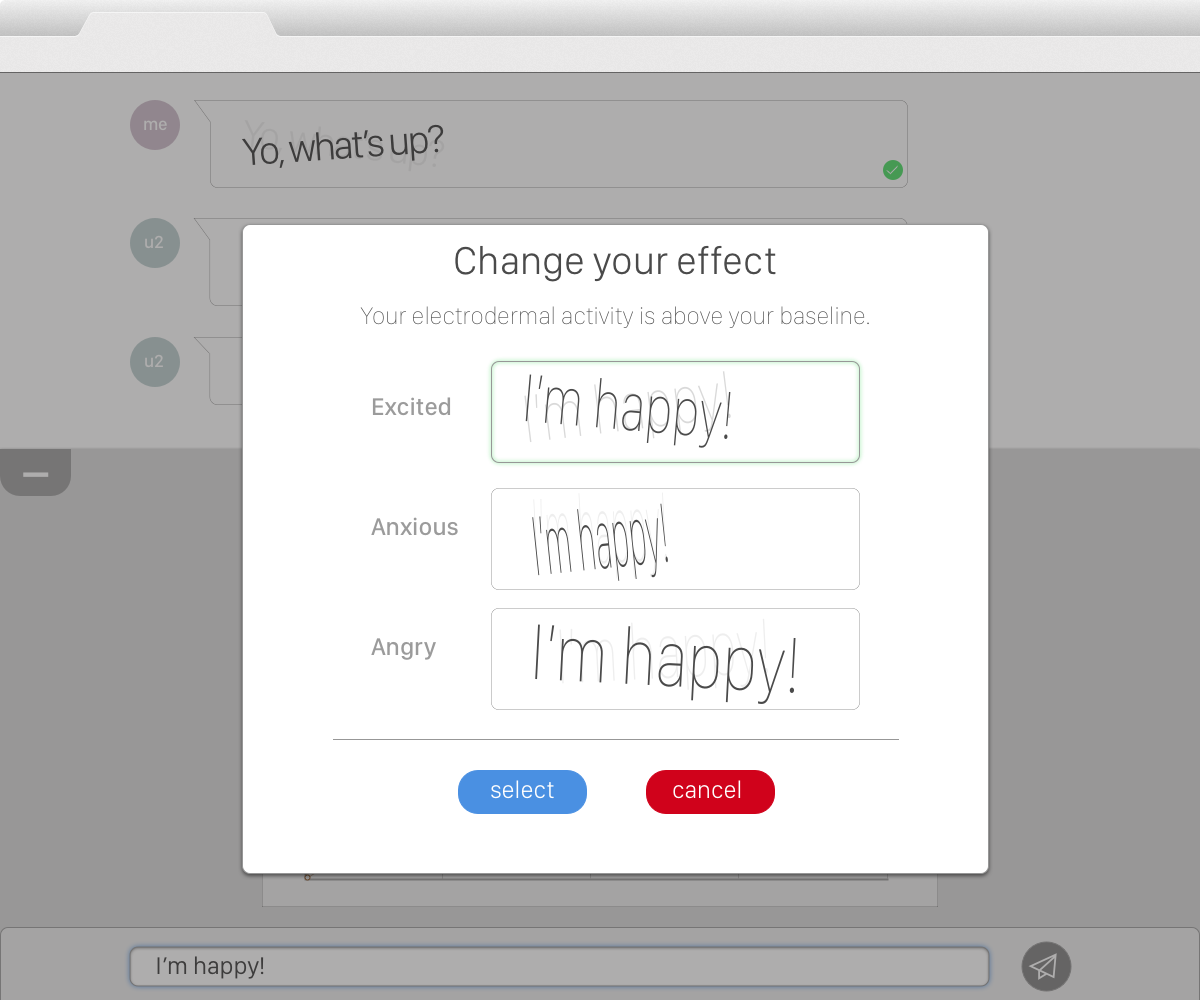

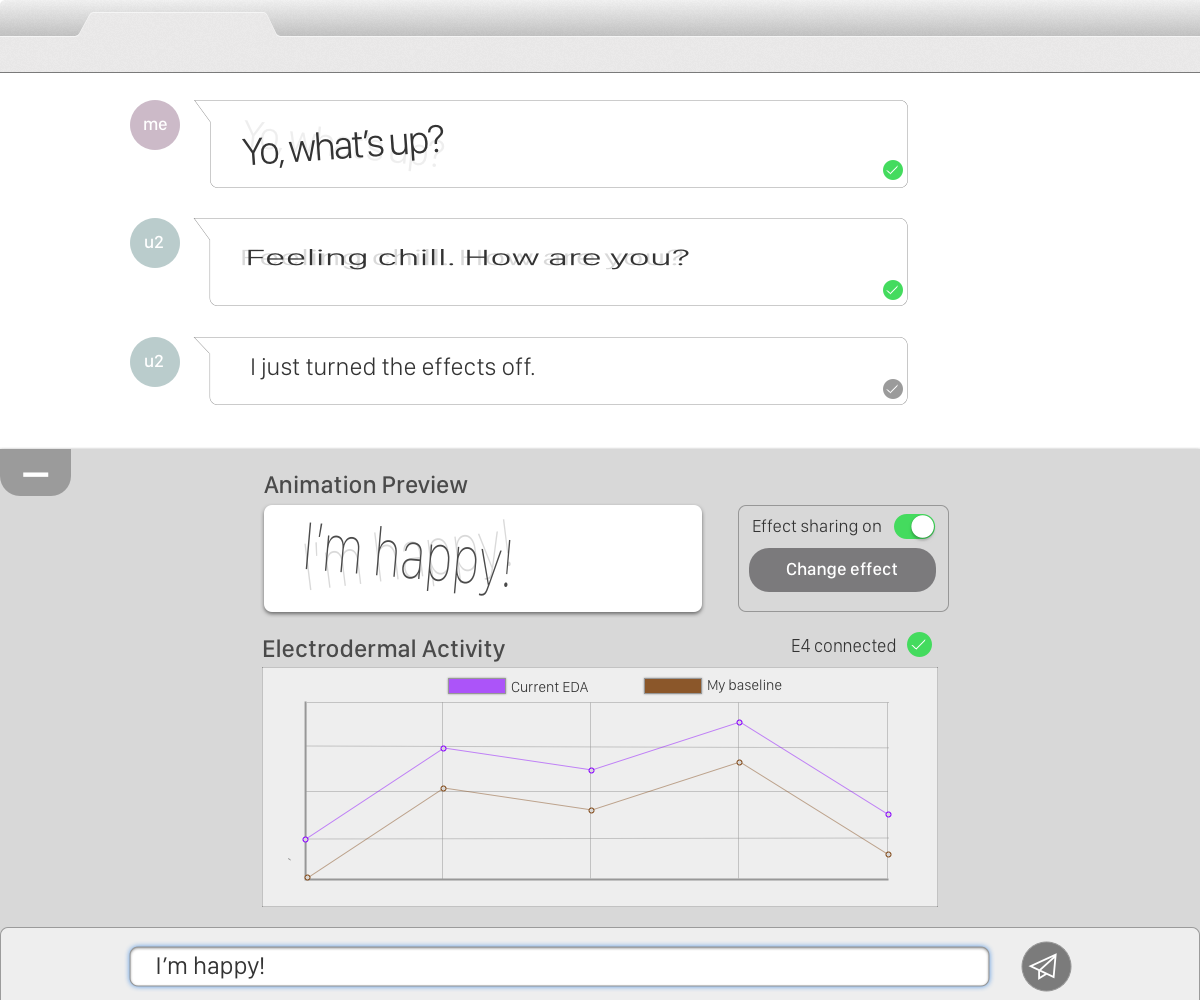

Each participant could choose from a library of text animation effects. As the participant typed, a preview box displayed an animation of their text at a fast or slow speed, depending on whether the participant was above or below their baseline. The participant could not control the animation speed, which was determined by their physiological data, but they could control the animation style of the effect. In addition to choosing their effect, the participant could also choose whether or not to share the animation with the other person in the chat. Each participant chatted with the experimenter, whose sharing settings were off, and answered a series of positive emotional, negative emotional, and neutral questions.
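The speed rule and sharing toggle described above can be sketched roughly as follows. This is a minimal illustration, not the study's actual code: the function names `animationSpeed` and `buildOutgoingMessage`, the effect names, and the message shape are all hypothetical. The write-up also does not say which direction maps to which speed, so the sketch assumes that a reading above baseline plays fast and anything else plays slow.

```javascript
// Hypothetical effect names; the real effect library is not listed in this write-up.
const EFFECTS = ["bounce", "shake", "pulse"];

// Assumption: above baseline maps to "fast"; at or below baseline maps to "slow".
function animationSpeed(currentEda, baselineEda) {
  return currentEda > baselineEda ? "fast" : "slow";
}

// Build what the other person in the chat would receive.
// The participant picks the effect and the sharing setting;
// the speed comes only from the sensor reading.
function buildOutgoingMessage({ text, effect, sharing, currentEda, baselineEda }) {
  if (!EFFECTS.includes(effect)) {
    throw new Error(`Unknown effect: ${effect}`);
  }
  if (!sharing) {
    // Sharing is off: send plain text with no animation data attached.
    return { text, animated: false };
  }
  return {
    text,
    animated: true,
    effect,
    speed: animationSpeed(currentEda, baselineEda),
  };
}

// Example: a participant above their baseline who has sharing turned on.
const shared = buildOutgoingMessage({
  text: "I had a great day",
  effect: "bounce",
  sharing: true,
  currentEda: 0.62,
  baselineEda: 0.45,
});
console.log(shared);
```

Keeping the speed out of the participant's hands while leaving the effect and the sharing flag as inputs mirrors the split between physiological and user-controlled settings described above.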

After I sat with each participant to calculate a baseline of activity, they could view a streaming chart of their electrodermal activity, along with its status above or below that pre-calculated baseline, at any point during the pilot experiment.
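One simple way to derive a baseline and the above/below status shown alongside the chart is to average the readings from a calibration period. This write-up does not say how the baseline was actually computed, so the use of a mean, the function names `computeBaseline` and `baselineStatus`, and the sample values below are assumptions for illustration only.

```javascript
// Assumption: the baseline is the arithmetic mean of the electrodermal
// activity samples (in microsiemens) collected during calibration.
function computeBaseline(samples) {
  if (samples.length === 0) {
    throw new Error("Need at least one calibration sample");
  }
  const sum = samples.reduce((total, value) => total + value, 0);
  return sum / samples.length;
}

// Report where the current reading sits relative to the baseline.
function baselineStatus(currentEda, baseline) {
  if (currentEda > baseline) return "above";
  if (currentEda < baseline) return "below";
  return "at";
}

// Made-up calibration readings, not data from the study.
const calibrationSamples = [0.25, 0.5, 0.75];
const baseline = computeBaseline(calibrationSamples);
console.log(baseline, baselineStatus(0.7, baseline));
```

A median or a trimmed mean would be a reasonable alternative if the calibration window contained motion artifacts or other spikes.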

Design Process

After reviewing the findings and codebase from Raina Langevin’s previous kinetic typography work, I sketched out several feature design directions on paper and in Sketch before building on the working prototype with the existing CSS animations. In particular, I explored how sharing settings could be toggled, and considered surfacing animation categories to the participants.

After several rounds of critique to tie the designs back to our research goals, as well as checking them for technical feasibility, I moved forward to development and research planning for the final pilot design. The testing process and findings are documented in my final report: Pilot Study: Sharing Electrodermal Biofeedback with Customizable Kinetic Type.